Found 12 posts matching "Docker"

-

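## OpenClaw Complete Guide: Docker Deployment · Memory System · Multi-Agent

Packaged on top of dhso/openclaw-docker; suited to quickly deploying a personal AI assistant.

### What is OpenClaw?

OpenClaw is an open-source, self-hosted gateway for a personal AI assistant. It lets you talk to an AI through messaging channels such as DingTalk, Feishu, QQ, Telegram, and WhatsApp. You run a Gateway process on your own server, and it bridges the messaging channels and the AI models.

Core features:

- Self-hosted: your data stays under your control and does not pass through third parties
- Multiple channels connected at the same time (DingTalk/Feishu/QQ/Telegram/WhatsApp/Discord)
- Multiple Agents running in parallel, each with its own persona and memory
- A built-in layered memory system that gives the assistant real long-term memory
- Extensible through Skills: code execution, browser automation, image generation, and more

### Contents

1. Preparation: choosing a VPS and configuring models
2. Security notes
3. Docker deployment
4. Channel integration
5. Layered memory system
6. QMD semantic memory search
7. Multi-Agent mode
8. End-to-end workflow
9. Recommended skills

### 1. Preparation: choosing a VPS and configuring models

#### Why deploy with Docker on a VPS?

OpenClaw is a self-hosted personal AI assistant gateway. It can run shell commands, read and write files, and send messages, so it holds considerable privileges. The deployment method you choose directly affects security and stability.

Reasons to prefer VPS + Docker:

| Advantage | Description |
| --- | --- |
| Data ownership | All conversations, memories, and API keys stay on your own server and do not pass through third parties |
| Stable network | A VPS is online 24 hours a day and does not depend on your local network or on your computer being switched on |
| Container isolation | Docker isolates the filesystem and processes, limiting the Agent's "blast radius" |
| Simple persistence | Mount a volume to persist data; upgrading the image does not lose it |
| Multi-Agent friendly | One VPS can run several Agents with resources managed in one place |
| Easy backups | Only the `/root/.openclaw` directory needs to be backed up |

Why local deployment is not recommended: the assistant goes offline whenever the local machine shuts down, a changing IP breaks external access, and an Agent with access to your local filesystem carries higher risk.

#### Recommended VPS plans

RackNerd Black Friday promotion (renews at the same price):

| RAM | CPU | Disk (SSD) | Traffic | Bandwidth | Price | Buy |
| --- | --- | --- | --- | --- | --- | --- |
| 1G | 1 core | 25G | 2T/month | 1Gbps | $10.6/year | Buy |
| 2.5G | 2 cores | 45G | 3T/month | 1Gbps | $18.66/year | Buy |
| 4G | 3 cores | 65G | 6.5T/month | 1Gbps | $29.98/year | Buy |
| 6G | 5 cores | 100G | 10T/month | 1Gbps | $44.98/year | Buy |
| 8G | 6 cores | 150G | 20T/month | 1Gbps | $62.49/year | Buy |

Several data centers are available and the renewal price is unchanged, which suits running OpenClaw long term. For personal use, the 2.5G or 4G plan is recommended.

#### Recommended AI model plans

OpenClaw needs an AI model API. The Alibaba Cloud Bailian series is recommended:

| Plan | Audience | Link |
| --- | --- | --- |
| AI large-model starter plan | First-time users, low-cost entry | Claim now |
| Alibaba Cloud Bailian Coding Plan | Developers, coding and chat scenarios | Claim now |

#### Connecting Alibaba Cloud Coding Plan to OpenClaw

Coding Plan provides a dedicated API key and connects to OpenClaw through an OpenAI-compatible interface. It supports Qwen, Kimi, GLM, MiniMax, and other mainstream models, all free to use.

Step 1: get the dedicated Coding Plan API key

Go to the Alibaba Cloud Bailian console and obtain the dedicated Coding Plan API key (note: this is not the regular Bailian API key).

Step 2: edit the OpenClaw configuration

Add the following to `~/.openclaw/openclaw.json`, replacing `YOUR_API_KEY` with your actual key:

```json
{
  "models": {
    "mode": "merge",
    "providers": {
      "bailian": {
        "baseUrl": "https://coding.dashscope.aliyuncs.com/v1",
        "apiKey": "YOUR_API_KEY",
        "api": "openai-completions",
        "models": [
          {
            "id": "qwen3.5-plus",
            "name": "qwen3.5-plus (general chat)",
            "input": ["text", "image"],
            "contextWindow": 1000000,
            "maxTokens": 65536
          },
          {
            "id": "qwen3-coder-plus",
            "name": "qwen3-coder-plus (coding)",
            "contextWindow": 1000000,
            "maxTokens": 65536
          },
          {
            "id": "kimi-k2.5",
            "name": "kimi-k2.5 (long context)",
            "input": ["text", "image"],
            "contextWindow": 262144,
            "maxTokens": 32768
          }
        ]
      }
    }
  },
  "agents": {
    "defaults": {
      "model": {
        "primary": "bailian/qwen3.5-plus"
      }
    }
  }
}
```

For more models (glm-5, MiniMax-M2.5, and others), see the complete official configuration.

⚠️ Do not replace the whole configuration file, or you will overwrite existing channel settings such as DingTalk and Feishu. Locate the matching fields and merge them in place.

Step 3: restart to apply

```bash
openclaw gateway restart
```

#### Switching models

```
# Temporary switch (current session only)
/model qwen3-coder-plus
# Permanent switch: edit the primary field in the configuration file
```

#### Supported models

| Model | Notes |
| --- | --- |
| qwen3.5-plus | General chat, image input, 1M-token context |
| qwen3-max-2026-01-23 | High-quality reasoning |
| qwen3-coder-next | Coding |
| qwen3-coder-plus | Coding, 1M-token context |
| kimi-k2.5 | Image input, long context |
| glm-5 / glm-4.7 | Zhipu GLM series |
| MiniMax-M2.5 | MiniMax series |

📖 Full integration guide: Alibaba Cloud Help Center, "OpenClaw 接入 Coding Plan"

### 2. Security notes

#### OpenClaw's security model

⚠️ OpenClaw follows a personal-assistant security model; it is not a multi-tenant isolation system. One Gateway corresponds to one trust boundary (one user, one VPS).

Core principle: access control > model intelligence

Most security incidents are not sophisticated attacks. They are cases of "someone sent a message and the Bot did what it said". OpenClaw's defense works in this order:

- Identity first: who may talk to the Bot (DM pairing / allowlist)
- Scope second: where the Bot may act (tool permissions, sandbox, group restrictions)
- Model last: assume the model can be manipulated, and limit by design how far that manipulation can reach

#### Key security settings

1. Always set an access token

```bash
openclaw config set gateway.auth.token your-long-random-token
```

Without a token, the Gateway rejects all WebSocket connections (fail-closed).

2. DM access policy

```json5
{
  channels: {
    dingtalk: {
      dmPolicy: "allowlist", // only allowlisted users
      allowFrom: ["manager9327"]
    }
  }
}
```

- `pairing` (default): strangers need a pairing code that you approve
- `allowlist`: only allowlisted users; strangers are rejected outright
- `open`: anyone can send messages (⚠️ dangerous, use with care)

3. Docker firewall (important!)

Ports published by Docker bypass UFW's INPUT rules, so the filtering has to go in the DOCKER-USER chain:

```
# Append to the end of /etc/ufw/after.rules
*filter
:DOCKER-USER - [0:0]
-A DOCKER-USER -m conntrack --ctstate ESTABLISHED,RELATED -j RETURN
-A DOCKER-USER -s 127.0.0.0/8 -j RETURN
-A DOCKER-USER -s 10.0.0.0/8 -j RETURN
-A DOCKER-USER -s 192.168.0.0/16 -j RETURN
-A DOCKER-USER -m conntrack --ctstate NEW -j DROP
-A DOCKER-USER -j RETURN
COMMIT
```

Then run `ufw reload` to apply.

💡 Beginner tip: if your VPS is only used on a LAN or private network, or port access is already restricted by your cloud provider's security group, you can skip this step. The UFW + Docker firewall setup mainly targets servers exposed to the public internet.

4. Least-privilege tools

For group-chat Agents or Agents shared by several people, deny the dangerous tools:

```json5
{
  tools: {
    deny: ["gateway", "cron", "sessions_spawn", "sessions_send"]
  }
}
```

5. Sandbox isolation (optional)

Enable the Docker sandbox so that tool execution runs inside an isolated container:

```json5
{
  agents: {
    defaults: {
      sandbox: {
        mode: "non-main", // sandbox every session except the main one
        scope: "session"
      }
    }
  }
}
```

6. Regular security audits

```bash
openclaw security audit
openclaw security audit --deep
openclaw security audit --fix   # automatically fix some issues
```

#### Prompt injection protection

A risk specific to AI assistants: an attacker can use message content to steer the model into performing malicious actions. Measures that reduce the risk:

- Keep the DM allowlist; do not open DMs to strangers
- In group chats, set `requireMention: true` so the Bot does not respond to every message
- Stay cautious with tools that read external content, such as `web_fetch` and `browser`
- Use the newest, strongest models: newer generations resist prompt injection noticeably better

#### File permissions

```bash
chmod 700 ~/.openclaw
chmod 600 ~/.openclaw/openclaw.json
```

### 3. Docker deployment

#### Prerequisites

- Docker is installed
- Port 18789 is open (web console)

1. Initialize the configuration (first run)

```bash
docker run --rm -it \
  -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw onboard
```

2. Configure the gateway

```bash
# Local gateway mode
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw config set gateway.mode local

# Bind to the LAN
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw config set gateway.bind lan

# Configure trusted proxies
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw config set gateway.trustedProxies '["127.0.0.1", "::1", "10.0.0.0/8"]'

# Set the access token
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw config set gateway.auth.token your_token

# ⚠️ Required on version 2026.2.17 and later, otherwise startup fails
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  -v openclaw_cache:/root/.cache \
  dhso/openclaw:latest \
  openclaw config set gateway.controlUi.dangerouslyAllowHostHeaderOriginFallback true

# Health check and repair
docker run --rm -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  dhso/openclaw:latest \
  openclaw doctor --fix
```

3. Start the service

```bash
docker run -d \
  --name claw \
  -e TZ=Asia/Shanghai \
  -v openclaw_data:/root/.openclaw \
  -v openclaw_cache:/root/.cache \
  --restart=unless-stopped \
  -p 18789:18789 \
  dhso/openclaw:latest \
  openclaw gateway run
```

Open `http://<ip>:18789` to reach the web console.

4. Use docker-compose (recommended)

A compose file is easier to maintain than a pile of `docker run` commands and is recommended for production:

```yaml
# docker-compose.yml
services:
  claw:
    image: dhso/openclaw:latest
    container_name: claw
    restart: unless-stopped
    environment:
      - TZ=Asia/Shanghai
    volumes:
      - openclaw_data:/root/.openclaw
      - openclaw_cache:/root/.cache
    ports:
      - "18789:18789"
    command: openclaw gateway run

volumes:
  openclaw_data:
  openclaw_cache:
```

```bash
# Start
docker compose up -d
# Follow the logs
docker compose logs -f claw
# Restart
docker compose restart claw
# Stop
docker compose down
```

#### Data persistence

| Volume | Path | Contents |
| --- | --- | --- |
| openclaw_data | /root/.openclaw | Configuration, plugins, workspace, memory files |
| openclaw_cache | /root/.cache | Model cache (QMD local models and similar) |

⚠️ Files that must persist have to live under `/root/.openclaw`; other directories are lost when the container restarts.

### 4. Channel integration

OpenClaw supports several instant-messaging channels. Channel plugins connect the AI assistant to the platforms you already use. The main supported channels and their integration guides are listed below.

#### Supported channels

| Channel | Typical use | Difficulty |
| --- | --- | --- |
| DingTalk | Inside a company, team collaboration | ⭐⭐⭐ |
| Feishu | Inside a company, team collaboration | ⭐⭐⭐ |
| QQ | Personal use, small circles | ⭐⭐ |
| Telegram | Personal use, overseas teams | ⭐ |
| WhatsApp | Personal use, overseas communication | ⭐ |
| Discord | Developer communities | ⭐⭐ |

#### DingTalk

A DingTalk app and bot let users chat with the bot and drive OpenClaw to complete tasks.

Overview of the steps:

1. Create a DingTalk app and obtain the Client ID and Client Secret
2. Create a DingTalk bot (Stream mode)
3. Create a card template (AI card, supports streaming responses)
4. Request the permissions `Card.Streaming.Write`, `Card.Instance.Write`, and `qyapi_robot_sendmsg`
5. Publish the app and add the bot to a group chat or a one-to-one chat

📖 Detailed guide: DingTalk integration guide. Official documentation: OpenClaw DingTalk channel.

#### Feishu

A Feishu app and bot let users chat with the bot through Feishu and drive OpenClaw to complete tasks.

Overview of the steps:

1. Create a custom enterprise app on the Feishu Open Platform and obtain the App ID and App Secret
2. Configure the app permissions (bulk-import the JSON permission configuration)
3. Enable the bot capability and configure event subscription (long-connection mode, add the `im.message.receive_v1` event)
4. Publish the app
5. Find the bot in Feishu, send it a message to receive a pairing code, then run `openclaw pairing approve feishu <pairing-code>` to complete authorization

📖 Detailed guide: Feishu integration guide. Official documentation: OpenClaw Feishu channel.

#### QQ

A QQ bot lets users chat with the bot through QQ and drive OpenClaw to complete tasks.

Overview of the steps:

1. Sign in to the QQ Open Platform, register an account, and bind your QQ (you cannot sign in directly with a QQ account)
2. Create a bot and obtain the AppID and AppSecret
3. Enter the AppID and AppSecret in the OpenClaw configuration
4. Add the VPS public IP to the allowlist (get it with `curl ifconfig.me`)
5. Configure the sandbox environment, add test members, and scan the QR code to bind mobile QQ

📖 Detailed guide: QQ integration guide.

#### Common configuration

After any channel is connected, configure bindings in `openclaw.json` to route the channel to an Agent:

```json5
{
  bindings: [
    { agentId: "main", match: { channel: "dingtalk", accountId: "default" } },
    { agentId: "main", match: { channel: "feishu", accountId: "default" } }
  ]
}
```

Routing rules for multiple channels and accounts are covered in Chapter 7: Multi-Agent mode.

### 5. Layered memory system

OpenClaw's memory system is built on the filesystem and gives the assistant long-term memory across sessions.

#### The memory pyramid

```text
Core memory       MEMORY.md              ← key people, preferences, major decisions (main session only)
      ↑
Yearly memory     YYYY.md                ← yearly milestones, highly condensed
      ↑
Monthly memory    YYYY-MM.md             ← important events and decisions of the month
      ↑
Daily memory      YYYY-MM-DD.md          ← summary of the day's events (original record, never modified)
      ↑
Temporary memory  YYYY-MM-DD-HHMM.md     ← full session transcript (deleted automatically after 30 days)
```

#### File layout

```text
workspace/
├── MEMORY.md                  # core memory (permanent, main session only)
└── memory/
    ├── 2026.md                # yearly memory
    ├── 2026-03.md             # monthly memory
    ├── 2026-03-11.md          # daily memory
    └── 2026-03-11-1041.md     # temporary session record (deleted after 30 days)
```

#### The layers

| Layer | File format | Lifetime | Description |
| --- | --- | --- | --- |
| Temporary memory | YYYY-MM-DD-HHMM.md | 30 days | Full session transcript, generated automatically |
| Daily memory | YYYY-MM-DD.md | Permanent | Summary of the day's important events; the original record is not modified |
| Monthly memory | YYYY-MM.md | Permanent | Monthly roll-up; may be refined and merged |
| Yearly memory | YYYY.md | Permanent | Yearly distillation, high-level summary |
| Core memory | MEMORY.md | Permanent | Fast access to key information; loaded only in the main session |

#### Automatic maintenance with cron tasks

```text
Daily at 02:00            → roll temporary memory up into daily memory; delete temporary files older than 30 days
Every Monday at 03:00     → extract the important parts of last week's daily memory into monthly memory
1st of each month, 04:00  → extract the important parts of last month's monthly memory into yearly memory
January 1 at 05:00        → refine the yearly memory into a highly condensed form
```

```bash
# List the current cron tasks
openclaw cron list

# Add the daily memory maintenance task (example)
openclaw cron add \
  --name "Daily memory maintenance" \
  --schedule "cron 0 2 * * * @ Asia/Shanghai" \
  --task "Roll yesterday's temporary memory up into the daily memory file, delete temporary memory older than 30 days, and update the QMD index" \
  --target isolated
```

#### Triggering maintenance manually

If the cron tasks have never run (status `idle`), or you want the memory tidied immediately, just tell the Agent:

"Run memory maintenance once: roll the recent temporary memory up into daily memory and update the monthly memory."

The Agent reads all temporary memory files, distills the important content into the matching daily and monthly memory files, and updates MEMORY.md.

#### MEMORY.md privacy policy

MEMORY.md contains sensitive personal information. It is loaded only in the main session (private chat/DM). Group chats and shared sessions never load it, which prevents privacy leaks.

### 6. QMD semantic memory search

QMD (Quantum Memory Database) is OpenClaw's built-in semantic search engine for Markdown.

#### Installation

```bash
npm install -g @tobilu/qmd
```

#### Initializing the index

```bash
cd ~/.openclaw/workspace
qmd collection add . --name memory-files --mask "{MEMORY.md,memory/**/*.md}"
qmd status
```

#### Three search modes

1. `qmd search`: BM25 full-text search (recommended when there is no GPU)

Fast and GPU-free; a good fit for most VPS environments.

```bash
qmd search "老板 钉钉" -c memory-files                # "boss DingTalk"
qmd search "数据库决策" -c memory-files --full        # "database decision"
qmd search "待办事项" -c memory-files --files -n 5    # "to-do items"
```

How it works: BM25 weights keywords by term frequency and inverse document frequency. For Chinese, use combinations of keywords rather than long sentences.

2. `qmd query`: hybrid semantic search (needs a GPU or a high-performance CPU)

Vector search + keyword search + reranking. It understands natural-language meaning and gives the best search quality.

```bash
qmd query "上次讨论的技术选型结论是什么" -c memory-files      # "what did we conclude about the tech stack last time"
qmd query "最近的重要决策" -c memory-files --min-score 0.7    # "recent important decisions"
```

Pipeline: Query Expansion → Vector Search → BM25 → Reranking (Qwen3-Reranker-0.6B)

⚠️ On a low-end VPS without a GPU, stick to `qmd search`.

3. `qmd vsearch`: pure vector similarity search

```bash
qmd vsearch "项目里程碑" -c memory-files -n 5    # "project milestones"
```

#### Exact reads

```bash
qmd get memory/2026-02.md
qmd get MEMORY.md:5 -l 10
qmd multi-get "memory/2026-*.md" -l 50
```

#### Typical flow

```text
User asks: "What is the boss's DingTalk ID?"
      ↓
qmd search "老板 钉钉 ID" -c memory-files -n 3
      ↓
Match found in MEMORY.md, lines 5-8
      ↓
qmd get MEMORY.md:5 -l 4
      ↓
Return the answer
```

#### Index maintenance

```bash
qmd update     # update the index after adding files
qmd embed -f   # regenerate the vector embeddings
qmd cleanup    # clear the cache
qmd status     # show the status
```

### 7. Multi-Agent mode

A single Gateway process runs several fully isolated Agents. Each Agent has its own Workspace, Session Store, Skills, and Auth.

#### Core concepts

| Concept | Description |
| --- | --- |
| agentId | Unique Agent identifier; maps to one workspace + session set |
| accountId | A channel account instance (for example, different DingTalk bots) |
| binding | A routing rule that decides which Agent receives a message |

#### Routing priority (highest to lowest)

1. Exact `peer` match (a specific DM or group ID)
2. `parentPeer` match (thread inheritance)
3. `guildId` + `roles` (Discord role routing)
4. `accountId` match (a specific channel account)
5. Channel-level match (`accountId: "*"`)
6. Default Agent (`default: true`, or the first one)

#### Configuration example

```json5
{
  agents: {
    list: [
      { id: "main", default: true, workspace: "~/.openclaw/workspace" },
      { id: "work", workspace: "~/.openclaw/workspace-work", agentDir: "~/.openclaw/agents/work/agent" }
    ]
  },
  bindings: [
    { agentId: "main", match: { channel: "whatsapp", accountId: "personal" } },
    { agentId: "work", match: { channel: "whatsapp", accountId: "biz" } }
  ]
}
```

#### My actual setup (3 Agents)

Different DingTalk bot accounts route to different Agents:

| Agent ID | Name | DingTalk account | Workspace |
| --- | --- | --- | --- |
| main | 小海狮 (Little Sea Lion) | xiaohaishi | workspace/ |
| xiaolongxia | 小龙虾 (Little Crayfish) | xiaolongxia | workspace-xiaolongxia/ |
| xiaopangxie | 小螃蟹 (Little Crab) | xiaopangxie | workspace-xiaopangxie/ |

```json5
{
  bindings: [
    { agentId: "main", match: { channel: "dingtalk", accountId: "xiaohaishi" } },
    { agentId: "xiaopangxie", match: { channel: "dingtalk", accountId: "xiaopangxie" } },
    { agentId: "xiaolongxia", match: { channel: "dingtalk", accountId: "xiaolongxia" } }
  ]
}
```

Each Agent is fully independent: its own persona files (SOUL.md/IDENTITY.md), its own memory, its own skills, and its own cron tasks.

#### Adding a new Agent

```bash
openclaw agents add <agentId>
openclaw agents list --bindings
```

#### Per-Agent tool restrictions (for shared Agents)

```json5
{
  agents: {
    list: [{
      id: "family",
      sandbox: { mode: "all", scope: "agent" },
      tools: {
        allow: ["read", "exec", "sessions_list"],
        deny: ["write", "edit", "browser"]
      }
    }]
  }
}
```

#### Agent-to-Agent communication (off by default)

```json5
{
  tools: {
    agentToAgent: {
      enabled: true,
      allow: ["main", "xiaolongxia", "xiaopangxie"]
    }
  }
}
```

Once enabled, Agents can collaborate through the `sessions_send` tool.

### 8. End-to-end workflow

```text
User sends a message (DingTalk/WhatsApp/Telegram)
      ↓
Gateway routes it to the matching Agent according to the bindings
      ↓
Agent starts and reads SOUL.md / USER.md / today's memory / MEMORY.md
      ↓
Agent handles the request, calling qmd search to look up past memory when needed
      ↓
Agent finishes the task and writes important information to memory/YYYY-MM-DD.md
      ↓
02:00 cron roll-up → daily memory
      ↓
Monday 03:00 cron roll-up → monthly memory
      ↓
1st of the month 04:00 cron roll-up → yearly memory
```

### 9. Recommended skills

OpenClaw Skills are modular capability packs. They live under `workspace/skills/` or `~/.openclaw/skills/` and are loaded automatically when the Agent starts. This chapter focuses on two core skills that let an Agent keep improving.

#### self-improvement: continuous self-improvement

Skill name: `self-improvement`

Purpose: lets the Agent record errors, corrections, and lessons so that it keeps improving across sessions.

Triggers:

| Situation | Action |
| --- | --- |
| A command fails | Record in `.learnings/ERRORS.md` |
| The user corrects the Agent | Record in `.learnings/LEARNINGS.md` (category: correction) |
| The user asks for a feature that does not exist | Record in `.learnings/FEATURE_REQUESTS.md` |
| A better approach is found | Record in `.learnings/LEARNINGS.md` (category: best_practice) |

File layout:

```text
workspace/
└── .learnings/
    ├── LEARNINGS.md          # corrections, knowledge gaps, best practices
    ├── ERRORS.md             # command failures, exceptions
    └── FEATURE_REQUESTS.md   # new features requested by users
```

Example record:

```markdown
## [LRN-20260311-001] correction
**Logged**: 2026-03-11T10:00:00Z
**Priority**: medium
**Status**: pending
**Area**: config
### Summary
Deliverables belong in the agent_temp directory, not docs/
### Details
User correction: task outputs must go under agent_temp/<task-name-date>/
### Suggested Action
Reinforce this rule in AGENTS.md and check it before every task
```

Promotion: when a learning record applies broadly, it can be "promoted" to long-term memory:

| Target file | Suitable content |
| --- | --- |
| SOUL.md | Behavioral guidelines |
| AGENTS.md | Workflow conventions |
| MEMORY.md | Important lessons learned |
| TOOLS.md | Notes on tool usage |

After promotion, change the entry's status to `promoted` and note the target file.

Quick check for pending records (`-F` matches the literal `**`, and `-h` drops the file-name prefix so the heading filter works):

```bash
grep -hF 'Status**: pending' .learnings/*.md | wc -l
grep -hF -B5 'Priority**: high' .learnings/*.md | grep '^## \['
```

#### skill-creator: skill creation wizard

Skill name: `skill-creator`

Purpose: guides the Agent through creating a new skill, packaging repetitive work into a reusable skill pack.

What is a skill? A skill is "an onboarding guide written by a domain expert": it bundles a domain's workflows, tool integrations, and knowledge so the Agent turns from a general assistant into a specialist.

Skill layout:

```text
skill-name/
├── SKILL.md       # required: frontmatter + usage instructions
├── scripts/       # executable scripts (Python/Bash)
├── references/    # reference documents (loaded on demand)
└── assets/        # output resources (templates, images, and so on)
```

SKILL.md frontmatter example:

```yaml
---
name: my-skill
description: "What it does and when to use it. Use when: (1) scenario one, (2) scenario two"
---
```

⚠️ The `description` is what triggers the skill, so it must state clearly when the skill should be used.

Creation process:

```text
1. Clarify the need   → collect concrete usage scenarios
2. Plan the content   → decide which scripts/references/assets are needed
3. Initialize         → python3 scripts/init_skill.py <skill-name> --path ./skills/
4. Write the content  → implement the scripts, write SKILL.md
5. Package            → python3 scripts/package_skill.py ./skills/<skill-name>
6. Iterate            → keep refining after real use
```

Design principles:

- Brevity first: the context window is a shared resource, so write only what the Agent does not already know
- Progressive disclosure: keep SKILL.md under 500 lines and move details into `references/`
- Code over description: if a script can solve it, do not make the Agent reason it out again each time

Installing skills:

```bash
# Install from ClawHub
clawhub install <skill-name>

# Package and install locally
python3 scripts/package_skill.py ./skills/my-skill
cp my-skill.skill ~/.openclaw/skills/
```

#### Other useful skills

| Skill | Purpose | Install |
| --- | --- | --- |
| qmd | Semantic memory search, see Chapter 6 | Built in |
| browser-automation | Playwright browser automation: scraping, form filling, screenshots | `clawhub install browser-automation` |
| web-content-fetcher | Fetches JS-rendered page content and gets past anti-scraping limits | `clawhub install web-content-fetcher` |
| github | GitHub Issues/PR/CI management, wraps the gh CLI | `clawhub install github` |
| nano-banana-pro | AI image generation (Gemini 3 Pro Image) | `clawhub install nano-banana-pro` |
| claude-code | Claude Code CLI for multi-file refactoring and debugging | `clawhub install claude-code` |

📖 More skills: ClawHub skill marketplace

### Related resources

| Resource | Link |
| --- | --- |
| Docker image | dhso/openclaw-docker |
| OpenClaw official documentation | https://docs.openclaw.ai |
| Multi-Agent documentation | https://docs.openclaw.ai/concepts/multi-agent |
| Security configuration documentation | https://docs.openclaw.ai/gateway/security |
| ClawHub skill marketplace | https://clawhub.com |
| Wuying Lincore Help Center | https://docs-lincore.wuying.com |
| Alibaba Cloud Coding Plan | https://help.aliyun.com/zh/model-studio/openclaw-coding-plan |
| OpenClaw community Discord | https://discord.com/invite/clawd |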
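
The file-naming convention of the layered memory system lends itself to a small helper. The following is only a sketch built from the file names and the 30-day lifetime stated in the guide; the function names `classify` and `expired_temporary` are hypothetical and are not part of OpenClaw, whose own maintenance runs through cron tasks handled by the Agent.

```python
import re
from datetime import datetime, timedelta

# Patterns follow the naming convention described in the guide.
# The helper names below are illustrative, not OpenClaw APIs.
TIER_PATTERNS = [
    ("temporary", re.compile(r"^\d{4}-\d{2}-\d{2}-\d{4}\.md$")),
    ("daily", re.compile(r"^\d{4}-\d{2}-\d{2}\.md$")),
    ("monthly", re.compile(r"^\d{4}-\d{2}\.md$")),
    ("yearly", re.compile(r"^\d{4}\.md$")),
]


def classify(filename):
    """Return the memory tier for a file name, or None if it is not a memory file."""
    if filename == "MEMORY.md":
        return "core"
    for tier, pattern in TIER_PATTERNS:
        if pattern.match(filename):
            return tier
    return None


def expired_temporary(filenames, now, max_age_days=30):
    """List temporary memory files whose timestamp is older than max_age_days."""
    cutoff = now - timedelta(days=max_age_days)
    expired = []
    for name in filenames:
        if classify(name) != "temporary":
            continue
        try:
            stamp = datetime.strptime(name[:-3], "%Y-%m-%d-%H%M")
        except ValueError:
            continue  # digits matched the pattern but do not form a valid date
        if stamp < cutoff:
            expired.append(name)
    return expired
```

Only temporary files are ever candidates for deletion here, which mirrors the guide's rule that daily, monthly, yearly, and core memory are permanent.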

-

## Quick install of Home Assistant with Docker

We introduced Home Assistant in an earlier post. The simplest way to install it is with Docker, in a single command:

```bash
docker run -d \
  --name=home_assistant \
  -e TZ="Asia/Shanghai" \
  -v hass_config:/config \
  -v /dev/bus/usb:/dev/bus/usb \
  -v /var/run/dbus:/var/run/dbus \
  --net=host \
  --privileged \
  --restart unless-stopped \
  homeassistant/home-assistant:stable
```

Once the container is running, open `http://[IP]:8123` to see the web interface.

-

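## Checking disk usage on Linux and cleaning up Docker space

Show filesystem usage:

```bash
df -h
```

Show how much space each directory or file takes:

```bash
du -sh * | sort -h
du -h --max-depth=1
```

Show the size of Docker's data directory:

```bash
du -hs /var/lib/docker/
```

Show Docker's own disk usage breakdown:

```bash
docker system df
```

Clean up disk space. This removes stopped containers, unused networks, dangling images (images without a tag), and build cache. On current Docker versions, unused volumes are removed only if you add `--volumes`:

```bash
docker system prune
```

For a more thorough cleanup, `-a` also removes every image that no container is using. Note that both commands delete containers you have only stopped temporarily, and `-a` additionally deletes images you are not using at the moment:

```bash
docker system prune -a
```

Clear the log of one container:

```bash
truncate -s 0 "$(docker inspect --format '{{.LogPath}}' <container-name>)"
```

Job script that clears the logs of all containers:

```bash
#!/bin/sh
# Show log sizes, truncate every container's JSON log, then show the sizes again.
find /var/lib/docker/containers/ -name '*-json.log' -exec ls -lh {} +
echo "==================== start clean docker containers logs =========================="
logs=$(find /var/lib/docker/containers/ -name '*-json.log')
for log in $logs
do
  echo "clean logs : $log"
  cat /dev/null > "$log"
done
echo "==================== end clean docker containers logs =========================="
find /var/lib/docker/containers/ -name '*-json.log' -exec ls -lh {} +
```

Limit the Docker log size:

```bash
# Edit the Docker daemon configuration file
nano /etc/docker/daemon.json
```

Add the following settings, which cap each container at one log file of at most 50 MB:

```json
{
  "log-driver": "json-file",
  "log-opts": {"max-size": "50m", "max-file": "1"}
}
```

```bash
# Restart the Docker service
systemctl daemon-reload
systemctl restart docker
```

The new limits apply to containers created after the restart; existing containers keep their old log settings until they are recreated.

Map overlay2 directories to containers:

```bash
for container in $(docker ps --all --quiet --format '{{ .Names }}'); do
  echo "$(docker inspect $container --format '{{.GraphDriver.Data.MergedDir }}' | \
    grep -Po '^.+?(?=/merged)' ) = $container"
done
```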
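
Editing `/etc/docker/daemon.json` by hand risks dropping keys that are already there, such as registry mirrors. As a minimal sketch, assuming the file is plain JSON, the log limits from this post can be merged into the existing content like this; the function name `merge_log_limits` is hypothetical, and the script only builds the new text without writing the file or restarting Docker.

```python
import json

# Log limits taken from the post: one json-file log of at most 50 MB per container.
LOG_LIMITS = {
    "log-driver": "json-file",
    "log-opts": {"max-size": "50m", "max-file": "1"},
}


def merge_log_limits(existing_text):
    """Return daemon.json content with the log limits merged in.

    Keys unrelated to logging are kept; existing log-opts entries are
    preserved unless they collide with the limits above.
    """
    config = json.loads(existing_text) if existing_text.strip() else {}
    config["log-driver"] = LOG_LIMITS["log-driver"]
    opts = dict(config.get("log-opts", {}))
    opts.update(LOG_LIMITS["log-opts"])
    config["log-opts"] = opts
    return json.dumps(config, indent=2)


if __name__ == "__main__":
    # Example input with a placeholder mirror URL; replace with the real file content.
    print(merge_log_limits('{"registry-mirrors": ["https://mirror.example.invalid"]}'))
```

Write the result back to `/etc/docker/daemon.json` yourself and restart the daemon as described in the post.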

-

## Deploying an ngrok reverse proxy with Docker

dhso/ngrok: another ngrok client, written in Python.

Start the ngrokd service:

```bash
docker run -d \
  --name ngrokd \
  --net=host \
  --restart=always \
  sequenceiq/ngrokd:latest \
  -httpAddr=:4480 \
  -httpsAddr=:4444 \
  -domain=xxx.com
```

Remember to update your DNS records so that the wildcard domain resolves to the server: `A | *.xxx.com | xxx.xxx.xxx.xxx`

Run the ngrok client (set `NGROK_HOST` to either the ngrokd domain or its IP address):

```bash
docker run -d \
  --name ngrok \
  --net=host \
  --restart=always \
  -e NGROK_HOST=xxx.com \
  -e NGROK_PORT=4443 \
  -e NGROK_BUFSIZE=8192 \
  -v ngrok_app:/app \
  dhso/ngrok:latest
```

Configuration:

| ENV | VAL |
| --- | --- |
| NGROK_HOST | your ngrokd domain or IP |
| NGROK_PORT | default 4443 |
| NGROK_BUFSIZE | default 8192 |

Inside the ngrok container:

1. `cd /app`
2. Edit `ngrok.json`
3. Save `ngrok.json` and restart the ngrok container

ngrok.json example:

```json
[{
  "protocol": "http",
  "hostname": "www.xxx.com",
  "subdomain": "",
  "rport": 0,
  "lhost": "127.0.0.1",
  "lport": 80
},{
  "protocol": "http",
  "hostname": "",
  "subdomain": "www",
  "rport": 0,
  "lhost": "127.0.0.1",
  "lport": 80
},{
  "protocol": "tcp",
  "hostname": "",
  "subdomain": "",
  "rport": 2222,
  "lhost": "127.0.0.1",
  "lport": 22
}]
```

Docker Hub: https://hub.docker.com/r/dhso/ngrok

GitHub: https://github.com/dhso/ngrok-python
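
Hand-editing `ngrok.json` is easy to get wrong when there are several tunnels. The sketch below generates the file content from Python, using only the six field names that appear in the example above. The helper names `tunnel` and `build_config` are hypothetical, and the checks (known protocol, port range) are assumptions on my part rather than rules documented by dhso/ngrok.

```python
import json


def tunnel(protocol, lport, lhost="127.0.0.1", hostname="", subdomain="", rport=0):
    """Build one tunnel entry with the same keys as the ngrok.json example."""
    if protocol not in ("http", "tcp"):
        # Only these two protocols appear in the example; others are untested here.
        raise ValueError("unsupported protocol: " + protocol)
    for port in (lport, rport):
        if not 0 <= port <= 65535:
            raise ValueError("port out of range: " + str(port))
    return {
        "protocol": protocol,
        "hostname": hostname,
        "subdomain": subdomain,
        "rport": rport,
        "lhost": lhost,
        "lport": lport,
    }


def build_config(tunnels):
    """Serialize a list of tunnel entries as the content of ngrok.json."""
    return json.dumps(list(tunnels), indent=2)


if __name__ == "__main__":
    # Recreates the three tunnels from the example file.
    print(build_config([
        tunnel("http", 80, hostname="www.xxx.com"),
        tunnel("http", 80, subdomain="www"),
        tunnel("tcp", 22, rport=2222),
    ]))
```

Save the output as `/app/ngrok.json` inside the container and restart it, as in the manual steps.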

-

## Ports to allow in iptables for Docker Swarm

```bash
# TCP port 2376: secure Docker client communication
iptables -I INPUT -p tcp --dport 2376 -j ACCEPT
# TCP port 2377: cluster management; only needs to be open on manager nodes
iptables -I INPUT -p tcp --dport 2377 -j ACCEPT
# TCP and UDP port 7946: communication among nodes (container network discovery)
iptables -I INPUT -p tcp --dport 7946 -j ACCEPT
iptables -I INPUT -p udp --dport 7946 -j ACCEPT
# UDP port 4789: overlay network traffic (container ingress network)
iptables -I INPUT -p udp --dport 4789 -j ACCEPT
# Portainer endpoint port
iptables -I INPUT -p tcp --dport 9001 -j ACCEPT
```
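
Because port 2377 is only needed on manager nodes, the rule set differs per node role. A minimal sketch that prints the matching commands is shown below; the port list comes from this post, while the function name `iptables_rules` and its parameters are hypothetical. It only generates the command strings and does not run them.

```python
# (protocol, port, purpose) as listed in the post.
SWARM_PORTS = [
    ("tcp", 2376, "secure Docker client communication"),
    ("tcp", 2377, "cluster management (manager nodes only)"),
    ("tcp", 7946, "communication among nodes"),
    ("udp", 7946, "communication among nodes"),
    ("udp", 4789, "overlay network traffic"),
]
PORTAINER_ENDPOINT = ("tcp", 9001, "Portainer endpoint")


def iptables_rules(is_manager, with_portainer=False):
    """Return the iptables commands a node needs, depending on its role."""
    ports = list(SWARM_PORTS)
    if with_portainer:
        ports.append(PORTAINER_ENDPOINT)
    rules = []
    for proto, port, _purpose in ports:
        if port == 2377 and not is_manager:
            continue  # worker nodes do not need the management port
        rules.append(f"iptables -I INPUT -p {proto} --dport {port} -j ACCEPT")
    return rules


if __name__ == "__main__":
    for rule in iptables_rules(is_manager=True, with_portainer=True):
        print(rule)
```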

-

Docker registry and npm registry

docker:
    docker-proxy-163: https://hub-mirror.c.163.com
    docker-proxy-dockerhub: https://registry-1.docker.io
    docker-proxy-ustc: https://docker.mirrors.ustc.edu.cn

npm:
    npm-proxy-cnpm: https://registry.npm.taobao.org
    npm-proxy-npmjs: https://registry.npmjs.org

.npmrc:
    registry=https://registry.npm.taobao.org
    sass_binary_site=http://npm.taobao.org/mirrors/node-sass
    electron_mirror=http://npm.taobao.org/mirrors/electron/

-

Deploying JupyterHub with Docker, with Lab and GitHub authorization enabled

This post shows how to run JupyterHub in Docker and use GitHub to authorize logins. After login, JupyterHub creates a single-user Docker container for the user, and a customized user image enables the Lab interface.

Pull the images:
    docker pull jupyterhub/jupyterhub
    docker pull jupyterhub/singleuser:0.9

Create the jupyterhub_network network:
    docker network create --driver bridge jupyterhub_network

Create the volume directory for jupyterhub:
    sudo mkdir -pv /data/jupyterhub
    sudo chown -R root /data/jupyterhub
    sudo chmod -R 777 /data/jupyterhub

Copy jupyterhub_config.py to the volume:
    cp jupyterhub_config.py /data/jupyterhub/jupyterhub_config.py

jupyterhub_config.py:
    # Configuration file for Jupyter Hub
    import os

    c = get_config()

    # Spawn with Docker
    c.JupyterHub.spawner_class = 'dockerspawner.DockerSpawner'

    # Spawn containers from this image
    c.DockerSpawner.image = 'dhso/jupyter_lab_singleuser:latest'

    # JupyterHub requires a single-user instance of the Notebook server, so we
    # default to using the `start-singleuser.sh` script included in the
    # jupyter/docker-stacks *-notebook images as the Docker run command when
    # spawning containers. Optionally, you can override the Docker run command
    # using the DOCKER_SPAWN_CMD environment variable.
    c.DockerSpawner.extra_create_kwargs.update({
        'command': "start-singleuser.sh --SingleUserNotebookApp.default_url=/lab"
    })

    # Connect containers to this Docker network
    network_name = 'jupyterhub_network'
    c.DockerSpawner.use_internal_ip = True
    c.DockerSpawner.network_name = network_name

    # Pass the network name as argument to spawned containers
    c.DockerSpawner.extra_host_config = {'network_mode': network_name}

    # Explicitly set notebook directory because we'll be mounting a host volume to
    # it. Most jupyter/docker-stacks *-notebook images run the Notebook server as
    # user `jovyan`, and set the notebook directory to `/home/jovyan/work`.
    # We follow the same convention.
    notebook_dir = '/home/jovyan/work'
    c.DockerSpawner.notebook_dir = notebook_dir

    # Mount the real user's Docker volume on the host to the notebook user's
    # notebook directory in the container
    c.DockerSpawner.volumes = {'jupyterhub-user-{username}': notebook_dir}

    # volume_driver is no longer a keyword argument to create_container()
    # c.DockerSpawner.extra_create_kwargs.update({ 'volume_driver': 'local' })

    # Remove containers once they are stopped
    c.DockerSpawner.remove_containers = True

    # For debugging arguments passed to spawned containers
    c.DockerSpawner.debug = True

    # The docker instances need access to the Hub, so the default loopback port doesn't work:
    # from jupyter_client.localinterfaces import public_ips
    # c.JupyterHub.hub_ip = public_ips()[0]
    c.JupyterHub.hub_ip = 'jupyterhub'

    # IP Configurations
    c.JupyterHub.ip = '0.0.0.0'
    c.JupyterHub.port = 80

    # OAuth with GitHub
    c.JupyterHub.authenticator_class = 'oauthenticator.GitHubOAuthenticator'
    c.Authenticator.whitelist = whitelist = set()
    c.Authenticator.admin_users = admin = set()

    os.environ['GITHUB_CLIENT_ID'] = 'your own GITHUB_CLIENT_ID'
    os.environ['GITHUB_CLIENT_SECRET'] = 'your own GITHUB_CLIENT_SECRET'
    os.environ['OAUTH_CALLBACK_URL'] = 'your own OAUTH_CALLBACK_URL, something like http://xxx/hub/oauth_callback'

    join = os.path.join
    here = os.path.dirname(__file__)
    with open(join(here, 'userlist')) as f:
        for line in f:
            parts = line.split()
            # Skip blank lines; a line read from a file still contains its
            # newline, so testing `not line` would never skip them.
            if not parts:
                continue
            name = parts[0]
            whitelist.add(name)
            if len(parts) > 1 and parts[1] == 'admin':
                admin.add(name)

    c.GitHubOAuthenticator.oauth_callback_url = os.environ['OAUTH_CALLBACK_URL']

Copy userlist to the volume. userlist stores the user names and their permissions:
    cp userlist /data/jupyterhub/userlist

userlist:
    dhso admin
    wengel

Build the Dockerfile:
    docker build -t dhso/jupyterhub .

Dockerfile:
    ARG BASE_IMAGE=jupyterhub/jupyterhub
    FROM ${BASE_IMAGE}
    RUN pip install --no-cache-dir --upgrade jupyter
    RUN pip install --no-cache-dir dockerspawner
    RUN pip install --no-cache-dir oauthenticator
    EXPOSE 80

Build the Dockerfile for the single-user Jupyter image, with Lab enabled:
    docker build -t dhso/jupyter_lab_singleuser .

Dockerfile:
    ARG BASE_IMAGE=jupyterhub/singleuser
    FROM ${BASE_IMAGE}
    # Mirrors to speed up downloads
    # RUN conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/
    # RUN conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/
    # RUN conda config --set show_channel_urls yes
    # Install jupyterlab
    # RUN conda install -c conda-forge jupyterlab
    RUN pip install jupyterlab
    RUN jupyter serverextension enable --py jupyterlab --sys-prefix
    USER jovyan

Create the jupyterhub container and map port 80:
    docker run -d --name jupyterhub -p 80:80 \
      --network jupyterhub_network \
      -v /var/run/docker.sock:/var/run/docker.sock \
      -v /data/jupyterhub:/srv/jupyterhub dhso/jupyterhub:latest

Visit localhost to see the interface.
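The userlist loop in jupyterhub_config.py can be checked on its own, outside JupyterHub. The sketch below pulls it into a standalone function; the name `parse_userlist` is an assumption made for illustration, and the file format (one user per line, optional `admin` marker) follows the userlist shown in the post.

```python
def parse_userlist(lines):
    """Split userlist lines into (allowed users, admin users).

    Each non-blank line is "<name>" or "<name> admin".
    """
    allowed, admins = set(), set()
    for line in lines:
        parts = line.split()
        if not parts:  # blank or whitespace-only line
            continue
        name = parts[0]
        allowed.add(name)
        if len(parts) > 1 and parts[1] == "admin":
            admins.add(name)
    return allowed, admins


# Same content as the userlist above, plus a blank line to show it is skipped.
allowed, admins = parse_userlist(["dhso admin\n", "\n", "wengel\n"])
print(sorted(allowed), sorted(admins))
```

Testing `parts` rather than `line` matters because a blank line read from a file is `"\n"`, which is truthy, so indexing `parts[0]` on it would raise an IndexError.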

-

swarm 安装小记 ssh root@40.73.96.111ssh root@40.73.99.31 ssh root@40.73.96.219 docker swarm join --token SWMTKN-1-2g1m3acikt9jfj1mnhyfqyta2e4w58we0lapdyri8i8aec3ndz-e1pztefxdo6nxu85n493y2g5p 172.16.5.5:2377 ### docker ### yum remove docker docker-client docker-client-latest docker-common \ docker-latest docker-latest-logrotate docker-logrotate \ docker-selinux docker-engine-selinux docker-engine yum install -y yum-utils device-mapper-persistent-data lvm2 yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo yum install docker-ce systemctl start docker systemctl enable docker nano /etc/docker/daemon.json { "registry-mirrors": ["https://registry.docker-cn.com"], "insecure-registries":["172.16.5.5:9060"] } systemctl daemon-reload systemctl restart docker.service ### swarm ### 初始化swarm manager并制定网卡地址 docker swarm init --advertise-addr 192.168.10.117 强制删除集群,如果是manager,需要加–force docker swarm leave --force docker node rm docker-118 查看swarm worker的连接令牌 docker swarm join-token worker 查看swarm manager的连接令牌 docker swarm join-token manager 使旧令牌无效并生成新令牌 docker swarm join-token --rotate 加入docker swarm集群 docker swarm join --token SWMTKN-1-5d2ipwo8jqdsiesv6ixze20w2toclys76gyu4zdoiaf038voxj-8sbxe79rx5qt14ol14gxxa3wf 192.168.10.117:2377 查看集群中的节点 docker node ls 查看集群中节点信息 docker node inspect docker-117 --pretty 调度程序可以将任务分配给节点 docker node update --availability active docker-118 调度程序不向节点分配新任务,但是现有任务仍然保持运行 docker node update --availability pause docker-118 调度程序不会将新任务分配给节点。调度程序关闭任何现有任务并在可用节点上安排它们 docker node update --availability drain docker-118 添加节点标签 docker node update --label-add label1 --label-add bar=label2 docker-117 docker node update --label-rm label1 docker-117 将节点升级为manager docker node promote docker-118 将节点降级为worker docker node demote docker-118 查看服务列表 docker service ls 查看服务的具体信息 docker service ps redis 创建一个不定义name,不定义replicas的服务 docker service create nginx 创建一个指定name的服务 docker service create --name my_web nginx 创建一个指定name、run cmd的服务 docker service 
create --name helloworld alping ping docker.com 创建一个指定name、version、run cmd的服务 docker service create --name helloworld alping:3.6 ping docker.com 创建一个指定name、port、replicas的服务 docker service create --name my_web --replicas 3 -p 80:80 nginx 为指定的服务更新一个端口 docker service update --publish-add 80:80 my_web 为指定的服务删除一个端口 docker service update --publish-rm 80:80 my_web 将redis:3.0.6更新至redis:3.0.7 docker service update --image redis:3.0.7 redis 配置运行环境,指定工作目录及环境变量 docker service create --name helloworld --env MYVAR=myvalue --workdir /tmp --user my_user alping ping docker.com 创建一个helloworld的服务 docker service create --name helloworld alpine ping docker.com 更新helloworld服务的运行命令 docker service update --args “ping www.baidu.com” helloworld 删除一个服务 docker service rm my_web 在每个群组节点上运行web服务 docker service create --name tomcat --mode global --publish mode=host,target=8080,published=8080 tomcat:latest 创建一个overlay网络 docker network create --driver overlay my_network docker network create --driver overlay --subnet 10.10.10.0/24 --gateway 10.10.10.1 my-network 创建服务并将网络添加至该服务 docker service create --name test --replicas 3 --network my-network redis 删除群组网络 docker service update --network-rm my-network test 更新群组网络 docker service update --network-add my_network test 创建群组并配置cpu和内存 docker service create --name my_nginx --reserve-cpu 2 --reserve-memory 512m --replicas 3 nginx 更改所分配的cpu和内存 docker service update --reserve-cpu 1 --reserve-memory 256m my_nginx 指定每次更新的容器数量 --update-parallelism 指定容器更新的间隔 --update-delay 定义容器启动后监控失败的持续时间 --update-monitor 定义容器失败的百分比 --update-max-failure-ratio 定义容器启动失败之后所执行的动作 --update-failure-action 创建一个服务并运行3个副本,同步延迟10秒,10%任务失败则暂停 docker service create --name mysql_5_6_36 --replicas 3 --update-delay 10s --update-parallelism 1 --update-monitor 30s --update-failure-action pause --update-max-failure-ratio 0.1 -e MYSQL_ROOT_PASSWORD=123456 mysql:5.6.36 回滚至之前版本 docker service update --rollback mysql 自动回滚 docker service create --name redis --replicas 6 --rollback-parallelism 2 
--rollback-monitor 20s --rollback-max-failure-ratio .2 redis:latest 创建服务并将目录挂在至container中 docker service create --name mysql --publish 3306:3306 --mount type=bind,src=/data/mysql,dst=/var/lib/mysql --replicas 3 -e MYSQL_ROOT_PASSWORD=123456 mysql:5.6.36 查看配置 docker config ls 查看配置详细信息 docker config inspect mysql 删除配置 docker config rm mysql ### portainer ### docker volume create portainer_data docker service create \ --name portainer \ --publish 9000:9000 \ --replicas=1 \ --constraint 'node.role == manager' \ --mount type=bind,src=//var/run/docker.sock,dst=/var/run/docker.sock \ --mount type=volume,src=portainer_data,dst=/data \ portainer/portainer \ -H unix:///var/run/docker.sock ### gitlab ### docker volume create --name gitlab_config docker volume create --name gitlab_logs docker volume create --name gitlab_data docker service create --name swarm_gitlab\ --publish 5002:443 --publish 5003:80 --publish 5004:22 \ --replicas 1 \ --mount type=volume,source=gitlab_config,destination=/etc/gitlab \ --mount type=volume,source=gitlab_logs,destination=/var/log/gitlab \ --mount type=volume,source=gitlab_data,destination=/var/opt/gitlab \ --constraint 'node.labels.type == gitlab_node' \ gitlab/gitlab-ce:latest ### mysql ### mysql: image: mysql:5.6.40 environment: # 设置时区为Asia/Shanghai - TZ=Asia/Shanghai - MYSQL_ROOT_PASSWORD=admin@1234 volumes: - mysql:/var/lib/mysql deploy: replicas: 1 restart_policy: condition: any resources: limits: cpus: "0.2" memory: 512M update_config: parallelism: 1 # 每次更新1个副本 delay: 5s # 每次更新间隔 monitor: 10s # 单次更新多长时间后没有结束则判定更新失败 max_failure_ratio: 0.1 # 更新时能容忍的最大失败率 order: start-first # 更新顺序为新任务启动优先 ports: - 3306:3306 networks: - myswarm-net networks: myswarm-net: external: true version: "3.2" services: web: image: 'gitlab/gitlab-ce:latest' restart: always environment: GITLAB_OMNIBUS_CONFIG: | external_url 'http://40.73.96.111:9030' ports: - '9030:80' - '9031:443' - '9032:22' volumes: - '/var/lib/docker/volumes/gitlab_config/_data:/etc/gitlab' - 
'/var/lib/docker/volumes/gitlab_logs/_data:/var/log/gitlab' - '/var/lib/docker/volumes/gitlab_data/_data:/var/opt/gitlab' # 配置http协议所使用的访问地址 external_url 'http://40.73.96.111:9030' # 配置ssh协议所使用的访问地址和端口 gitlab_rails['gitlab_ssh_host'] = '40.73.96.111' gitlab_rails['gitlab_shell_ssh_port'] = 9032 nginx['listen_port'] = 80 # 这里以新浪的邮箱为例配置smtp服务器 gitlab_rails['smtp_enable'] = true gitlab_rails['smtp_address'] = "smtp.sina.com" gitlab_rails['smtp_port'] = 25 gitlab_rails['smtp_user_name'] = "name4mail" gitlab_rails['smtp_password'] = "passwd4mail" gitlab_rails['smtp_domain'] = "sina.com" gitlab_rails['smtp_authentication'] = :login gitlab_rails['smtp_enable_starttls_auto'] = true # 还有个需要注意的地方是指定发送邮件所用的邮箱,这个要和上面配置的邮箱一致 gitlab_rails['gitlab_email_from'] = 'name4mail@sina.com' $ curl -L https://portainer.io/download/portainer-agent-stack.yml -o portainer-agent-stack.yml $ docker stack deploy --compose-file=portainer-agent-stack.yml portainer //remote use mysql; select host, user, authentication_string, plugin from user; GRANT ALL ON *.* TO 'root'@'%'; flush privileges; //mysql8 ALTER USER 'root'@'localhost' IDENTIFIED BY 'admin@1234' PASSWORD EXPIRE NEVER; ALTER USER 'root'@'%' IDENTIFIED WITH mysql_native_password BY 'admin@1234'; FLUSH PRIVILEGES; ace-center/target/ace-center.jar ace-center/target/ docker rm -f ace-center sleep 1 docker service create --name ace-center --publish 6010:8761 --replicas 1 -e JAR_PATH=/tmp/ace-center.jar dhso/springboot-app:1.0 FROM java:8 VOLUME /tmp ADD ace-center/target/ace-center.jar app.jar RUN bash -c 'touch /app.jar' ENTRYPOINT ["java","-Djava.security.egd=file:/dev/./urandom","-jar","/app.jar"] docker rm -f ace-center sleep 1 docker rmi -f dhso/ace-center sleep 1 cd /tmp/ace-center docker build -t dhso/ace-center . 
sleep 1 docker service create --name ace-center --publish 6010:8761 --replicas 1 dhso/ace-center docker service create --name ace-center --publish 6010:8080 --replicas 1 -e JAR_PATH=/tmp/ace-center.jar dhso/springboot-app:1.0 ## ace-center target/ace-center.jar,src/main/docker/Dockerfile ace-center docker service rm ace-center sleep 1s docker rm -f ace-center sleep 1s docker images|grep 172.16.5.5:9060/ace-center|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-center rm -rf docker mkdir docker cp target/ace-center.jar docker/ace-center.jar cp src/main/docker/Dockerfile docker/Dockerfile cd docker docker build -t 172.16.5.5:9060/ace-center:latest . sleep 1s docker push 172.16.5.5:9060/ace-center:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-center --network ace_network --constraint 'node.labels.type == worker' --publish 6010:8761 --replicas 1 172.16.5.5:9060/ace-center:latest ### ace-config ### target/ace-config.jar,src/main/docker/Dockerfile ace-config docker service rm ace-config sleep 1s docker rm -f ace-config sleep 1s docker images|grep 172.16.5.5:9060/ace-config|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-config rm -rf docker mkdir docker cp target/ace-config.jar docker/ace-config.jar cp src/main/docker/Dockerfile docker/Dockerfile cd docker docker build -t 172.16.5.5:9060/ace-config:latest . 
sleep 1s docker push 172.16.5.5:9060/ace-config:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-config --network ace_network --constraint 'node.labels.type == worker' --publish 6011:8750 --replicas 1 172.16.5.5:9060/ace-config:latest ### ace-auth ### target/ace-auth.jar,src/main/docker/Dockerfile ace-auth docker service rm ace-auth sleep 1s docker rm -f ace-auth sleep 1s docker images|grep 172.16.5.5:9060/ace-auth|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-auth rm -rf docker mkdir docker cp target/ace-auth.jar docker/ace-auth.jar cp src/main/docker/Dockerfile docker/Dockerfile cd docker docker build -t 172.16.5.5:9060/ace-auth:latest . sleep 1s docker push 172.16.5.5:9060/ace-auth:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-auth --network ace_network --constraint 'node.labels.type == worker' --publish 6013:9777 --replicas 1 172.16.5.5:9060/ace-auth:latest ### ace-admin ### target/ace-admin.jar,src/main/docker/Dockerfile,src/main/docker/wait-for-it.sh ace-admin docker service rm ace-admin sleep 1s docker rm -f ace-admin sleep 1s docker images|grep 172.16.5.5:9060/ace-admin|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-admin rm -rf docker mkdir docker cp target/ace-admin.jar docker/ace-admin.jar cp src/main/docker/Dockerfile docker/Dockerfile cp src/main/docker/wait-for-it.sh docker/wait-for-it.sh cd docker docker build -t 172.16.5.5:9060/ace-admin:latest . 
sleep 1s docker push 172.16.5.5:9060/ace-admin:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-admin --network ace_network --constraint 'node.labels.type == worker' --publish 6014:8762 --replicas 1 172.16.5.5:9060/ace-admin:latest ### ace-gate ### target/ace-gate.jar,src/main/docker/Dockerfile,src/main/docker/wait-for-it.sh ace-gate docker service rm ace-gate sleep 1s docker rm -f ace-gate sleep 1s docker images|grep 172.16.5.5:9060/ace-gate|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-gate rm -rf docker mkdir docker cp target/ace-gate.jar docker/ace-gate.jar cp src/main/docker/Dockerfile docker/Dockerfile cp src/main/docker/wait-for-it.sh docker/wait-for-it.sh cd docker docker build -t 172.16.5.5:9060/ace-gate:latest . sleep 1s docker push 172.16.5.5:9060/ace-gate:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-gate --network ace_network --constraint 'node.labels.type == worker' --publish 6015:8765 --replicas 1 172.16.5.5:9060/ace-gate:latest ### ace-dict ### target/ace-dict.jar,src/main/docker/Dockerfile,src/main/docker/wait-for-it.sh ace-dict docker service rm ace-dict sleep 1s docker rm -f ace-dict sleep 1s docker images|grep 172.16.5.5:9060/ace-dict|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-dict rm -rf docker mkdir docker cp target/ace-dict.jar docker/ace-dict.jar cp src/main/docker/Dockerfile docker/Dockerfile cp src/main/docker/wait-for-it.sh docker/wait-for-it.sh cd docker docker build -t 172.16.5.5:9060/ace-dict:latest . 
sleep 1s docker push 172.16.5.5:9060/ace-dict:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-dict --network ace_network --constraint 'node.labels.type == worker' --publish 6016:9999 --replicas 1 172.16.5.5:9060/ace-dict:latest ### ace-ui ### FROM node:8-alpine run mkdir webapp add . ./webapp run npm config set registry https://registry.npm.taobao.org run npm install -g http-server WORKDIR ./webapp cmd http-server -p 9527 EXPOSE 9527 ========== dist/*,Dockerfile ace-ui docker service rm ace-ui sleep 1s docker rm -f ace-ui sleep 1s docker images|grep 172.16.5.5:9060/ace-ui|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-ui cp Dockerfile dist/Dockerfile cd dist docker build -t 172.16.5.5:9060/ace-ui:latest . sleep 1s docker push 172.16.5.5:9060/ace-ui:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-ui --network ace_network --constraint 'node.labels.type == worker' --publish 6012:9527 --replicas 1 172.16.5.5:9060/ace-ui:latest ### ace-monitor ### target/ace-monitor.jar,src/main/docker/Dockerfile ace-monitor docker service rm ace-monitor sleep 1s docker rm -f ace-monitor sleep 1s docker images|grep 172.16.5.5:9060/ace-monitor|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-monitor rm -rf docker mkdir docker cp target/ace-monitor.jar docker/ace-monitor.jar cp src/main/docker/Dockerfile docker/Dockerfile cd docker docker build -t 172.16.5.5:9060/ace-monitor:latest . 
sleep 1s docker push 172.16.5.5:9060/ace-monitor:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-monitor --network ace_network --constraint 'node.labels.type == worker' --publish 6017:8764 --replicas 1 172.16.5.5:9060/ace-monitor:latest ### ace-trace ### target/ace-trace.jar,src/main/docker/Dockerfile ace-trace docker service rm ace-trace sleep 1s docker rm -f ace-trace sleep 1s docker images|grep 172.16.5.5:9060/ace-trace|awk '{print $3}'|xargs docker rmi -f sleep 1s cd /tmp/ace-trace rm -rf docker mkdir docker cp target/ace-trace.jar docker/ace-trace.jar cp src/main/docker/Dockerfile docker/Dockerfile cd docker docker build -t 172.16.5.5:9060/ace-trace:latest . sleep 1s docker push 172.16.5.5:9060/ace-trace:latest sleep 1s docker network create --driver overlay --subnet 10.222.0.0/16 ace_network sleep 1s docker service create --name ace-trace --network ace_network --constraint 'node.labels.type == worker' --publish 6018:9411 --replicas 1 172.16.5.5:9060/ace-trace:latest docker service create --name redis_01 --mount type=volume,src=redis_data,dst=/data \ --network ace_network --constraint 'node.labels.type == manager' --publish 9050:6379 --replicas 1 redis:latest docker service create --name mysql_01 --mount type=volume,src=mysql_data,dst=/var/lib/mysql \ --env MYSQL_ROOT_PASSWORD=admin@1234 --network ace_network \ --constraint 'node.labels.type == manager' --publish 9051:3306 --replicas 1 mysql:5.6 /usr/bin/mysqladmin -u root password 'admin@1234' docker service create --name rabbitmq_01 --mount type=volume,src=rabbitmq,dst=/var/lib/rabbitmq \ --network ace_network --constraint 'node.labels.type == manager' \ --publish 9052:5671 --publish 9053:5672 --publish 9054:15672 --replicas 1 rabbitmq:latest FROM node:8-alpine run mkdir webapp add . 
./webapp run npm config set registry https://registry.npm.taobao.org run npm install -g http-server WORKDIR ./webapp cmd http-server -p 9527 EXPOSE 9527 yum install -y epel-release yum install -y htop
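The ace-* deployment notes above repeat the same remove, build, push, create sequence with only the service name and ports changing. A minimal sketch of that pattern as a template follows; the function name `deploy_commands` is hypothetical, and the registry address, network name and constraint are copied from the notes as defaults.

```python
from typing import List


def deploy_commands(service: str, published_port: int, target_port: int,
                    registry: str = "172.16.5.5:9060",
                    network: str = "ace_network") -> List[str]:
    """Build the command sequence used above to redeploy one service."""
    image = f"{registry}/{service}:latest"
    return [
        f"docker service rm {service}",
        f"docker build -t {image} .",
        f"docker push {image}",
        (
            f"docker service create --name {service} --network {network} "
            f"--constraint 'node.labels.type == worker' "
            f"--publish {published_port}:{target_port} --replicas 1 {image}"
        ),
    ]


for command in deploy_commands("ace-center", 6010, 8761):
    print(command)
```

The function only produces strings. The copy steps that prepare the build directory and the `sleep` pauses from the notes are left out for brevity.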

-

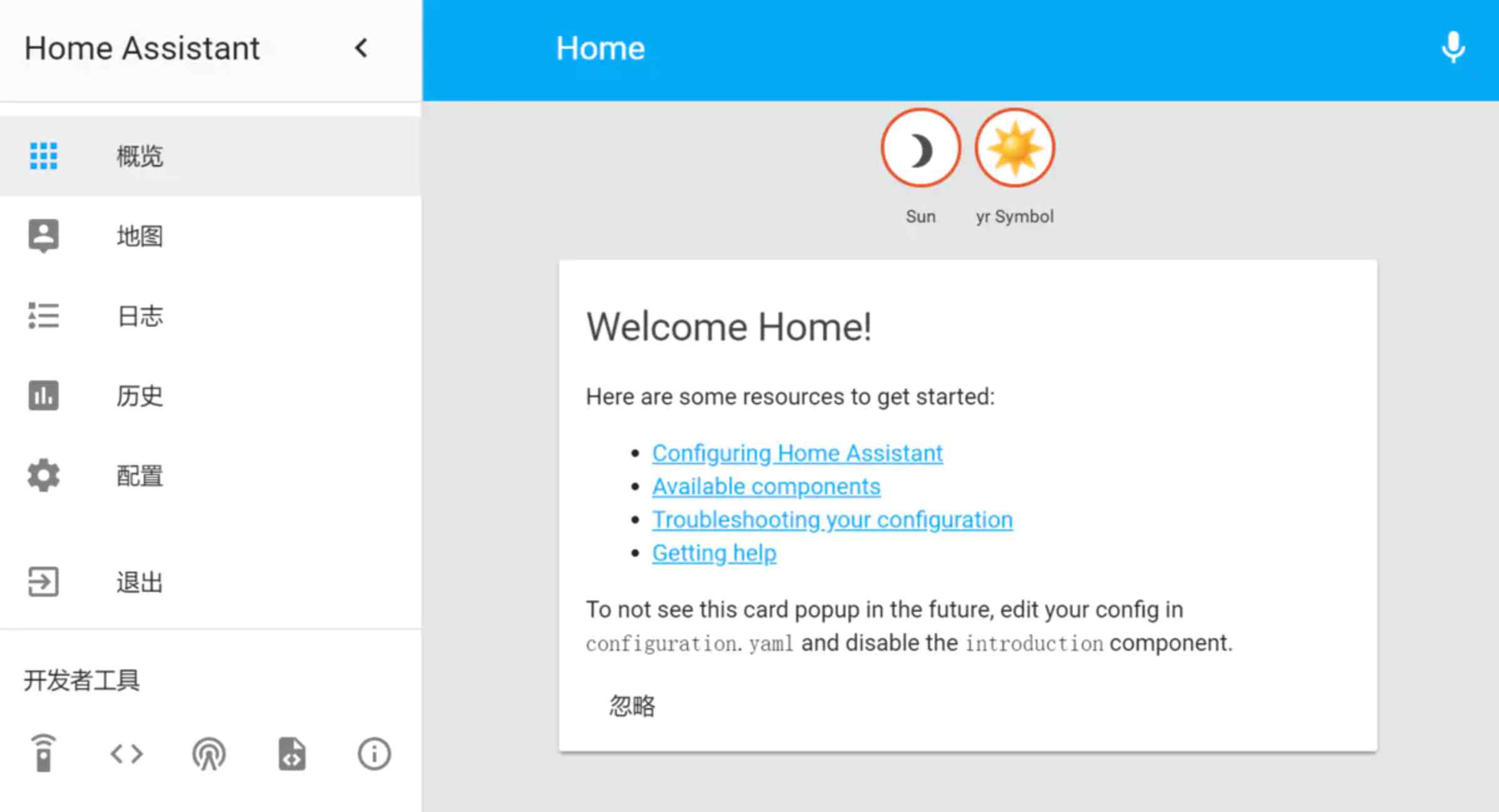

Deploying a MongoDB sharded cluster with replica sets on Docker

A sharded cluster is usually used to solve the following two problems:
    Storage capacity is limited by a single machine, that is, disk resources become the bottleneck.
    Read and write capacity is limited by a single machine (read capacity can also be extended by adding secondary nodes to a replica set). The bottleneck may be CPU, memory, or the network card, and it prevents read and write capacity from scaling.

Sharded architecture diagram

Create 3 config servers (configsvr):
    docker run -d -p 40001:40001 --privileged=true -v cnf40001:/data/db --name cnf_c1 mongo:latest --configsvr --port 40001 --dbpath /data/db --replSet crs
    docker run -d -p 40002:40002 --privileged=true -v cnf40002:/data/db --name cnf_c2 mongo:latest --configsvr --port 40002 --dbpath /data/db --replSet crs
    docker run -d -p 40003:40003 --privileged=true -v cnf40003:/data/db --name cnf_c3 mongo:latest --configsvr --port 40003 --dbpath /data/db --replSet crs

Connect to any member of the crs replica set:
    mongo --port 40001
Switch database:
    use admin
Write the configuration:
    config = {_id:"crs", configsvr:true, members:[
      {_id:0,host:"192.168.31.82:40001"},
      {_id:1,host:"192.168.31.82:40002"},
      {_id:2,host:"192.168.31.82:40003"}
    ]}
Initialize the replica set:
    rs.initiate(config)
If it has already been initialized, force the new configuration instead:
    rs.reconfig(config,{force:true})
Check the replica set status:
    rs.status()

Create 2 shard services (shardsvr). Each shardsvr has 3 members: 1 primary, 1 secondary, and 1 arbiter. The first shard is rs1:
    docker run -d -p 20001:20001 --privileged=true -v db20001:/data/db --name rs1_c1 mongo:latest --shardsvr --port 20001 --dbpath /data/db --replSet rs1
    docker run -d -p 20002:20002 --privileged=true -v db20002:/data/db --name rs1_c2 mongo:latest --shardsvr --port 20002 --dbpath /data/db --replSet rs1
    docker run -d -p 20003:20003 --privileged=true -v db20003:/data/db --name rs1_c3 mongo:latest --shardsvr --port 20003 --dbpath /data/db --replSet rs1

Connect to any member of the rs1 shard:
    mongo --port 20001
Switch database:
    use admin
Write the configuration:
    config = {_id:"rs1",members:[
      {_id:0,host:"192.168.31.82:20001"},
      {_id:1,host:"192.168.31.82:20002"},
      {_id:2,host:"192.168.31.82:20003",arbiterOnly:true}
    ]}
Initialize the replica set:
    rs.initiate(config)
If it has already been initialized, force the new configuration instead:
    rs.reconfig(config,{force:true})
Check the replica set status:
    rs.status()

Result:
    rs1:RECOVERING> rs.status()
    {
      "set" : "rs1",
      "date" : ISODate("2016-12-20T09:01:16.108Z"),
      "myState" : 1,
      "term" : NumberLong(1),
      "heartbeatIntervalMillis" : NumberLong(2000),
      "members" : [
        {
          "_id" : 0, "name" : "192.168.31.82:20001", "health" : 1, "state" : 1, "stateStr" : "PRIMARY", "uptime" : 7799,
          "optime" : { "ts" : Timestamp(1482224415, 1), "t" : NumberLong(1) },
          "optimeDate" : ISODate("2016-12-20T09:00:15Z"),
          "infoMessage" : "could not find member to sync from",
          "electionTime" : Timestamp(1482224414, 1),
          "electionDate" : ISODate("2016-12-20T09:00:14Z"),
          "configVersion" : 1, "self" : true
        },
        {
          "_id" : 1, "name" : "192.168.31.82:20002", "health" : 1, "state" : 2, "stateStr" : "SECONDARY", "uptime" : 71,
          "optime" : { "ts" : Timestamp(1482224415, 1), "t" : NumberLong(1) },
          "optimeDate" : ISODate("2016-12-20T09:00:15Z"),
          "lastHeartbeat" : ISODate("2016-12-20T09:01:15.016Z"),
          "lastHeartbeatRecv" : ISODate("2016-12-20T09:01:15.376Z"),
          "pingMs" : NumberLong(1),
          "syncingTo" : "192.168.30.200:20001",
          "configVersion" : 1
        },
        {
          "_id" : 2, "name" : "192.168.31.82:20003", "health" : 1, "state" : 7, "stateStr" : "ARBITER", "uptime" : 71,
          "lastHeartbeat" : ISODate("2016-12-20T09:01:15.016Z"),
          "lastHeartbeatRecv" : ISODate("2016-12-20T09:01:11.334Z"),
          "pingMs" : NumberLong(0),
          "configVersion" : 1
        }
      ],
      "ok" : 1
    }

Create the second shard, rs2, the same way (1 primary, 1 secondary, 1 arbiter):
    docker run -d -p 30001:30001 --privileged=true -v db30001:/data/db --name rs2_c1 mongo:latest --shardsvr --port 30001 --dbpath /data/db --replSet rs2
    docker run -d -p 30002:30002 --privileged=true -v db30002:/data/db --name rs2_c2 mongo:latest --shardsvr --port 30002 --dbpath /data/db --replSet rs2
    docker run -d -p 30003:30003 --privileged=true -v db30003:/data/db --name rs2_c3 mongo:latest --shardsvr --port 30003 --dbpath /data/db --replSet rs2

Connect to any member of the rs2 shard:
    mongo --port 30001
Switch database:
    use admin
Write the configuration:
    config = {_id:"rs2",members:[
      {_id:0,host:"192.168.31.82:30001"},
      {_id:1,host:"192.168.31.82:30002"},
      {_id:2,host:"192.168.31.82:30003",arbiterOnly:true}
    ]}
Initialize the replica set:
    rs.initiate(config)
If it has already been initialized, force the new configuration instead:
    rs.reconfig(config,{force:true})
Check the replica set status:
    rs.status()

Create 2 router services (mongos):
    docker run -d -p 50001:50001 --privileged=true --name ctr50001 mongo:latest mongos --configdb crs/192.168.31.82:40001,192.168.31.82:40002,192.168.31.82:40003 --port 50001 --bind_ip 0.0.0.0
    docker run -d -p 50002:50002 --privileged=true --name ctr50002 mongo:latest mongos --configdb crs/192.168.31.82:40001,192.168.31.82:40002,192.168.31.82:40003 --port 50002 --bind_ip 0.0.0.0

Register the shards with the config servers through mongos. Connect to a router:
    mongo --port 50001
Switch database:
    use admin
Add the shards:
    db.runCommand({addshard:"rs1/192.168.31.82:20001,192.168.31.82:20002,192.168.31.82:20003"})
    db.runCommand({addshard:"rs2/192.168.31.82:30001,192.168.31.82:30002,192.168.31.82:30003"})
List the shards (arbiters are not shown):
    db.runCommand({listshards:1})
    {
      "shards" : [
        { "_id" : "rs1", "host" : "rs1/192.168.31.82:20001,192.168.31.82:20002" },
        { "_id" : "rs2", "host" : "rs2/192.168.31.82:30001,192.168.31.82:30002" }
      ],
      "ok" : 1
    }

Test example

Enable sharding for the database and the collection. Not every collection in a database is sharded; only collections configured with shardcollection are, because not every collection needs sharding.
    db.runCommand({enablesharding:"mydb"})
    db.runCommand({shardcollection:"mydb.person", key:{id:1, company:1}})
Insert test data:
    use mydb
    for (i = 0; i < 10000; i++) {
      db.person.save({id:i, company:"baidu"})
    }
Check the result:
    db.person.stats()
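The three replica set configurations (crs, rs1, rs2) differ only in name, ports, and whether the last member is an arbiter. A minimal sketch that generates them follows; the function name `replset_config` and its parameters are assumptions made for illustration, and the host and ports come from the commands in this post.

```python
import json


def replset_config(name, host, ports, arbiter_last=False, configsvr=False):
    """Build a replica set config document like the ones passed to rs.initiate()."""
    members = [{"_id": i, "host": f"{host}:{port}"} for i, port in enumerate(ports)]
    if arbiter_last and members:
        members[-1]["arbiterOnly"] = True  # the last member only votes, holds no data
    config = {"_id": name, "members": members}
    if configsvr:
        config["configsvr"] = True
    return config


rs1 = replset_config("rs1", "192.168.31.82", [20001, 20002, 20003], arbiter_last=True)
print(json.dumps(rs1, indent=2))
```

Since JSON is also a valid JavaScript object literal, the printed document can be assigned to `config` in the mongo shell before calling `rs.initiate(config)`.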

Deploying a MongoDB sharded replica-set cluster on Docker

A sharded cluster is typically used to solve the following two problems:

Storage capacity is limited by a single machine, i.e. disk space becomes the bottleneck.
Read/write throughput is limited by a single machine (read capacity can also be extended by adding secondary nodes to a replica set); CPU, memory, or the network card becomes the bottleneck and throughput can no longer scale.

Sharded cluster diagram

Create 3 config servers (configsvr):

    docker run -d -p 40001:40001 --privileged=true -v cnf40001:/data/db --name cnf_c1 mongo:latest --configsvr --port 40001 --dbpath /data/db --replSet crs
    docker run -d -p 40002:40002 --privileged=true -v cnf40002:/data/db --name cnf_c2 mongo:latest --configsvr --port 40002 --dbpath /data/db --replSet crs
    docker run -d -p 40003:40003 --privileged=true -v cnf40003:/data/db --name cnf_c3 mongo:latest --configsvr --port 40003 --dbpath /data/db --replSet crs

Connect to any member of the crs replica set (on MongoDB 6.0 and later the shell binary is mongosh rather than mongo):

    mongo --port 40001

Switch to the admin database:

    use admin

Define the replica set configuration:

    config = {_id:"crs", configsvr:true, members:[
        {_id:0,host:"192.168.31.82:40001"},
        {_id:1,host:"192.168.31.82:40002"},
        {_id:2,host:"192.168.31.82:40003"}
    ] }

Initialize the replica set:

    rs.initiate(config)

If it has already been initialized, force a reconfiguration instead:

    rs.reconfig(config,{force:true})

Check the replica set status:

    rs.status()

Create 2 shards (shardsvr). Each shard is a replica set with 3 members: 1 primary, 1 secondary, and 1 arbiter.

First shard, rs1:

    docker run -d -p 20001:20001 --privileged=true -v db20001:/data/db --name rs1_c1 mongo:latest --shardsvr --port 20001 --dbpath /data/db --replSet rs1
    docker run -d -p 20002:20002 --privileged=true -v db20002:/data/db --name rs1_c2 mongo:latest --shardsvr --port 20002 --dbpath /data/db --replSet rs1
    docker run -d -p 20003:20003 --privileged=true -v db20003:/data/db --name rs1_c3 mongo:latest --shardsvr --port 20003 --dbpath /data/db --replSet rs1

Connect to any member of rs1:

    mongo --port 20001

Switch to the admin database:

    use admin

Define the replica set configuration:

    config = {_id:"rs1",members:[
        {_id:0,host:"192.168.31.82:20001"},
        {_id:1,host:"192.168.31.82:20002"},
        {_id:2,host:"192.168.31.82:20003",arbiterOnly:true}
    ] }

Initialize the replica set:

    rs.initiate(config)

If it has already been initialized, force a reconfiguration instead:

    rs.reconfig(config,{force:true})

Check the replica set status:

    rs.status()

Result:

    rs1:RECOVERING> rs.status()
    {
        "set" : "rs1",
        "date" : ISODate("2016-12-20T09:01:16.108Z"),
        "myState" : 1,
        "term" : NumberLong(1),
        "heartbeatIntervalMillis" : NumberLong(2000),
        "members" : [
            {
                "_id" : 0,
                "name" : "192.168.31.82:20001",
                "health" : 1,
                "state" : 1,
                "stateStr" : "PRIMARY",
                "uptime" : 7799,
                "optime" : { "ts" : Timestamp(1482224415, 1), "t" : NumberLong(1) },
                "optimeDate" : ISODate("2016-12-20T09:00:15Z"),
                "infoMessage" : "could not find member to sync from",
                "electionTime" : Timestamp(1482224414, 1),
                "electionDate" : ISODate("2016-12-20T09:00:14Z"),
                "configVersion" : 1,
                "self" : true
            },
            {
                "_id" : 1,
                "name" : "192.168.31.82:20002",
                "health" : 1,
                "state" : 2,
                "stateStr" : "SECONDARY",
                "uptime" : 71,
                "optime" : { "ts" : Timestamp(1482224415, 1), "t" : NumberLong(1) },
                "optimeDate" : ISODate("2016-12-20T09:00:15Z"),
                "lastHeartbeat" : ISODate("2016-12-20T09:01:15.016Z"),
                "lastHeartbeatRecv" : ISODate("2016-12-20T09:01:15.376Z"),
                "pingMs" : NumberLong(1),
                "syncingTo" : "192.168.30.200:20001",
                "configVersion" : 1
            },
            {
                "_id" : 2,
                "name" : "192.168.31.82:20003",
                "health" : 1,
                "state" : 7,
                "stateStr" : "ARBITER",
                "uptime" : 71,
                "lastHeartbeat" : ISODate("2016-12-20T09:01:15.016Z"),
                "lastHeartbeatRecv" : ISODate("2016-12-20T09:01:11.334Z"),
                "pingMs" : NumberLong(0),
                "configVersion" : 1
            }
        ],
        "ok" : 1
    }

Second shard, rs2 (same layout: 1 primary, 1 secondary, 1 arbiter):

    docker run -d -p 30001:30001 --privileged=true -v db30001:/data/db --name rs2_c1 mongo:latest --shardsvr --port 30001 --dbpath /data/db --replSet rs2
    docker run -d -p 30002:30002 --privileged=true -v db30002:/data/db --name rs2_c2 mongo:latest --shardsvr --port 30002 --dbpath /data/db --replSet rs2
    docker run -d -p 30003:30003 --privileged=true -v db30003:/data/db --name rs2_c3 mongo:latest --shardsvr --port 30003 --dbpath /data/db --replSet rs2

Connect to any member of rs2:

    mongo --port 30001

Switch to the admin database:

    use admin

Define the replica set configuration:

    config = {_id:"rs2",members:[
        {_id:0,host:"192.168.31.82:30001"},
        {_id:1,host:"192.168.31.82:30002"},
        {_id:2,host:"192.168.31.82:30003",arbiterOnly:true}
    ] }

Initialize the replica set:

    rs.initiate(config)

If it has already been initialized, force a reconfiguration instead:

    rs.reconfig(config,{force:true})

Check the replica set status:

    rs.status()

Create 2 routers (mongos):

    docker run -d -p 50001:50001 --privileged=true --name ctr50001 mongo:latest mongos --configdb crs/192.168.31.82:40001,192.168.31.82:40002,192.168.31.82:40003 --port 50001 --bind_ip 0.0.0.0
    docker run -d -p 50002:50002 --privileged=true --name ctr50002 mongo:latest mongos --configdb crs/192.168.31.82:40001,192.168.31.82:40002,192.168.31.82:40003 --port 50002 --bind_ip 0.0.0.0

Register the shards with the config servers through mongos. Connect to one of the routers:

    mongo --port 50001

Switch to the admin database:

    use admin

Add the shards:

    db.runCommand({addshard:"rs1/192.168.31.82:20001,192.168.31.82:20002,192.168.31.82:20003"})
    db.runCommand({addshard:"rs2/192.168.31.82:30001,192.168.31.82:30002,192.168.31.82:30003"})

List the shards (arbiters are not shown):

    db.runCommand({listshards:1})
    {
        "shards" : [
            { "_id" : "rs1", "host" : "rs1/192.168.31.82:20001,192.168.31.82:20002" },
            { "_id" : "rs2", "host" : "rs2/192.168.31.82:30001,192.168.31.82:30002" }
        ],
        "ok" : 1
    }

Test example

Enable sharding for the database and the collection. [Note: not every collection in a database is sharded. Only collections configured with shardcollection are sharded, because not every collection needs it.]

    db.runCommand({enablesharding:"mydb"})
    db.runCommand({shardcollection:"mydb.person", key:{id:1, company:1}})

Insert test data:

    use mydb
    for (i =0; i<10000;i++){ db.person.save({id:i, company:"baidu"})}

Check the result:

    db.person.stats()
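The three replica set configurations in the MongoDB post above (crs, rs1, rs2) differ only in name, ports, the configsvr flag, and whether the last member is an arbiter. As a rough sketch, not part of the original post, they could be generated rather than typed by hand. The function names `replica_set_config` and `shard_connection_string` are made up for illustration; the host IP and ports are the ones used in the post.

```python
import json

HOST = "192.168.31.82"  # host IP used throughout the post


def replica_set_config(name, ports, host=HOST, configsvr=False, arbiter_last=False):
    """Build the document that the post passes to rs.initiate()."""
    members = []
    for i, port in enumerate(ports):
        member = {"_id": i, "host": f"{host}:{port}"}
        # In the post, the third member of each shard is an arbiter.
        if arbiter_last and i == len(ports) - 1:
            member["arbiterOnly"] = True
        members.append(member)
    config = {"_id": name, "members": members}
    if configsvr:
        config["configsvr"] = True
    return config


def shard_connection_string(name, ports, host=HOST):
    """Build the 'name/host:port,...' string used by addshard and --configdb."""
    return name + "/" + ",".join(f"{host}:{port}" for port in ports)


if __name__ == "__main__":
    replica_sets = [
        ("crs", [40001, 40002, 40003], {"configsvr": True}),
        ("rs1", [20001, 20002, 20003], {"arbiter_last": True}),
        ("rs2", [30001, 30002, 30003], {"arbiter_last": True}),
    ]
    for name, ports, options in replica_sets:
        # json.dumps output is also valid mongo shell syntax.
        print("config = " + json.dumps(replica_set_config(name, ports, **options)))
        print(shard_connection_string(name, ports))
```

Each printed `config = ...` line can be pasted into the mongo shell before calling `rs.initiate(config)`.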

Building a Docker + Hadoop platform step by step (3)

Start the Hadoop container:

    docker run -itd --net=hadoop -p 50070:50070 -p 8088:8088 -p 8020:8020 --name hadoop-master --hostname hadoop-master -v /data:/mnt kiwenlau/hadoop:1.0

Enter the Hadoop container:

    docker exec -it hadoop-master bash

Stop Hadoop and start it again:

    $HADOOP_HOME/sbin/stop-all.sh
    $HADOOP_HOME/sbin/start-all.sh
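After restarting Hadoop, one quick sanity check is whether the ports published by the `docker run` command above accept TCP connections from the Docker host. The following is a minimal sketch under that assumption; `port_is_open` and `check_ports` are illustrative names, and which service listens on each port depends on how the image is configured (in a default Hadoop 2.x setup, 50070 and 8088 are the NameNode and ResourceManager web UIs).

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, unreachable host, and timeouts.
        return False


def check_ports(host, ports, timeout=1.0):
    """Map each port to whether it currently accepts connections."""
    return {port: port_is_open(host, port, timeout) for port in ports}


if __name__ == "__main__":
    # Ports taken from the -p flags of the docker run command.
    for port, is_open in check_ports("127.0.0.1", [50070, 8088, 8020]).items():
        print(f"port {port}: {'open' if is_open else 'closed'}")
```

A closed port right after `start-all.sh` may only mean the daemons are still starting, so it can be worth retrying after a few seconds.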

-

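Building a Docker + Hadoop platform step by step (1)

Download the system image

Download the minimal ISO file from the CentOS site, http://101.96.10.38/isoredirect.centos.org/centos/7/isos/x86_64/CentOS-7-x86_64-Minimal-1511.iso, and use UltraISO to create a bootable USB drive.

Create the boot drive

In UltraISO:
File > Open > select the ISO file
Bootable > Write Disk Image
In the dialog, set the write method to “USB-HDD+” > Write > OK

Install the system

Boot from the USB drive. On the boot screen, press the Up key to highlight the first entry, "Install CentOS 7".
Following the hint at the bottom of the screen, press e or b to edit the boot options.
Change

    vmlinuz initrd=initrd.img inst.stage2=hd:LABEL=CentOS\x207\x20x86_64 quiet

to (here /dev/sdb4 is the USB drive's partition; the device name may differ on your machine)

    vmlinuz initrd=initrd.img inst.stage2=hd:/dev/sdb4 quiet

Press Ctrl+X to enter the graphical installer.

* When partitioning the disk, standard partitions are recommended rather than LVM.

Configure the network

Open the interface configuration file (the interface may have a name other than eth0):

    vim /etc/sysconfig/network-scripts/ifcfg-eth0

Settings to edit:

    BOOTPROTO="static"      # change dhcp to static
    ONBOOT="yes"            # enable this configuration at boot
    IPADDR=192.168.1.171    # static IP
    GATEWAY=192.168.1.1     # default gateway
    NETMASK=255.255.255.0   # subnet mask
    DNS1=192.168.1.1        # DNS server

A complete file looks like this (the addresses in this sample differ from the ones above):

    HWADDR="00:15:5D:07:F1:02"
    TYPE="Ethernet"
    BOOTPROTO="static"      # change dhcp to static
    DEFROUTE="yes"
    PEERDNS="yes"
    PEERROUTES="yes"
    IPV4_FAILURE_FATAL="no"
    IPV6INIT="yes"
    IPV6_AUTOCONF="yes"
    IPV6_DEFROUTE="yes"
    IPV6_PEERDNS="yes"
    IPV6_PEERROUTES="yes"
    IPV6_FAILURE_FATAL="no"
    NAME="eth0"
    UUID="bb3a302d-dc46-461a-881e-d46cafd0eb71"
    ONBOOT="yes"            # enable this configuration at boot
    IPADDR=192.168.7.106    # static IP
    GATEWAY=192.168.7.1     # default gateway
    NETMASK=255.255.255.0   # subnet mask
    DNS1=192.168.7.1        # DNS server

Restart the network service:

    service network restart

Shut down:

    shutdown now                                  # shut down immediately
    shutdown +2                                   # shut down in 2 minutes
    shutdown 10:01                                # shut down at 10:01
    shutdown +2 "The machine will shutdown"       # shut down in 2 minutes and notify logged-in users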
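A common mistake with the static IP settings above is a GATEWAY that does not belong to the subnet defined by IPADDR and NETMASK, which leaves the machine without a default route after the network restart. As a small sketch, assuming the file uses the simple KEY=value form shown in the post, the settings can be checked before restarting. The helper names `parse_ifcfg` and `gateway_in_subnet` are illustrative.

```python
import ipaddress


def parse_ifcfg(text):
    """Parse KEY=value lines, dropping trailing # comments and surrounding quotes."""
    settings = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()
        if "=" not in line:
            continue
        key, value = line.split("=", 1)
        settings[key.strip()] = value.strip().strip('"')
    return settings


def gateway_in_subnet(settings):
    """Return True if GATEWAY lies inside the network of IPADDR/NETMASK."""
    network = ipaddress.ip_network(
        f"{settings['IPADDR']}/{settings['NETMASK']}", strict=False
    )
    return ipaddress.ip_address(settings["GATEWAY"]) in network


if __name__ == "__main__":
    sample = """
    BOOTPROTO="static"      # change dhcp to static
    IPADDR=192.168.1.171    # static IP
    GATEWAY=192.168.1.1     # default gateway
    NETMASK=255.255.255.0   # subnet mask
    """
    settings = parse_ifcfg(sample)
    print("gateway reachable from subnet:", gateway_in_subnet(settings))
```

The parser is deliberately naive: it would mis-handle a value that itself contains a `#`, which does not occur in the settings used here.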

-

Docker + Shipyard + Hadoop deployment plan

Related links

Shipyard official site: https://shipyard-project.com/docs/deploy/automated/
tuicool article: http://www.tuicool.com/articles/FnmeuuN
segmentfault article: https://segmentfault.com/a/1190000002464365
GitHub, hadoop-cluster-docker: https://github.com/kiwenlau/hadoop-cluster-docker
kiwenlau's blog: http://kiwenlau.com/2016/06/12/160612-hadoop-cluster-docker-update/